A new exhibition of AI-generated photographs is now on display at Gagosian in New York. The artist is Bennett Miller, the Oscar-nominated director of Moneyball (2011) and Capote (2005). According to the gallery, Miller’s project “…[engages] the history and format of photography to pose questions around the contingent and enigmatic nature of perception, reality, and truth—an enquiry made newly urgent by revolutionary innovations in computing.”

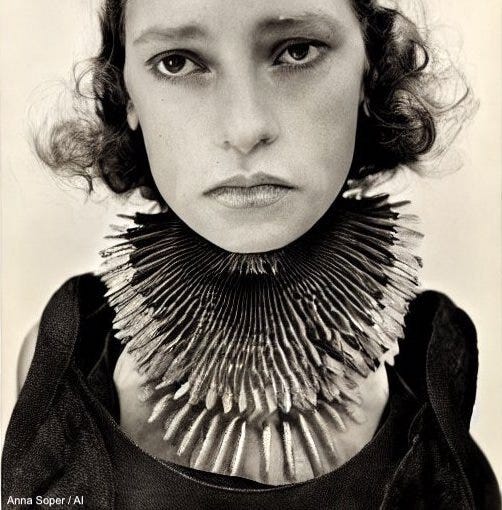

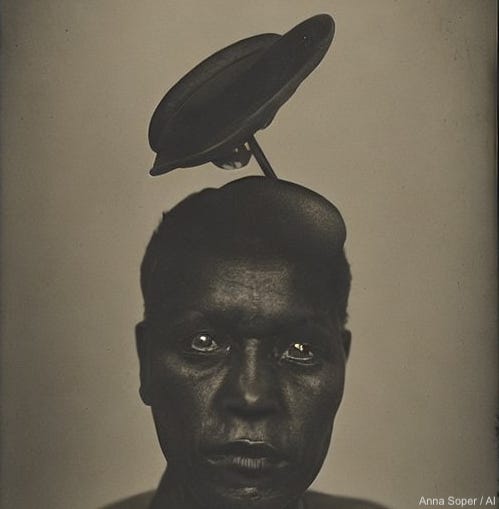

It’s not coincidental that I’ve been exploring AI image generation inspired by the history of photography. I’m sure many others are working in this area. But what is coincidental—if not a bit spooky—is that Miller’s results and mine look so similar.

Can you believe this??

When I saw Miller’s work, I sent a message to an artist and researcher I’m connected with on Twitter. He replied, “That’s really strange and confusing honestly...it’s weird to have such similar structures between the two works.”

He speculated that perhaps our prompts are similar, pulling the same levers in DALL·E 2’s and Stable Diffusion’s sizeable datasets. And he’s probably right. But what would inspire two separate artists to create the same sorts of images? That’s odd.

Both my work and Miller’s depict calamities, disasters, barren worlds and enigmatic bodies. In a review on Dirt, Terry Nguyen writes that Miller’s works “impart an eeriness upon the gallery”.

The images aren’t photographs because, to borrow Susan Sontag’s definition, there is no real experience or event being captured. There are no stakes attached to the subjects. Instead, they are amorphous vessels for a vibe, a fictive manipulation of feeling.

In other words, the vibes are off.

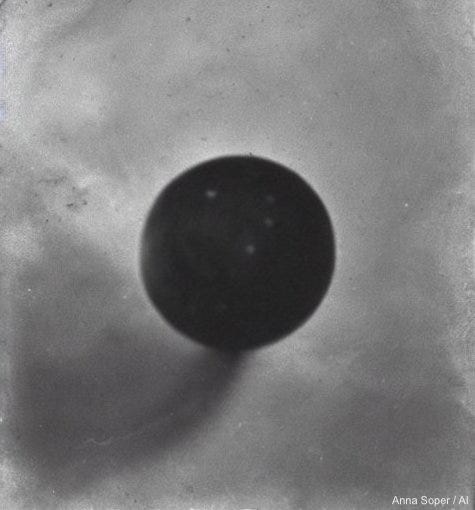

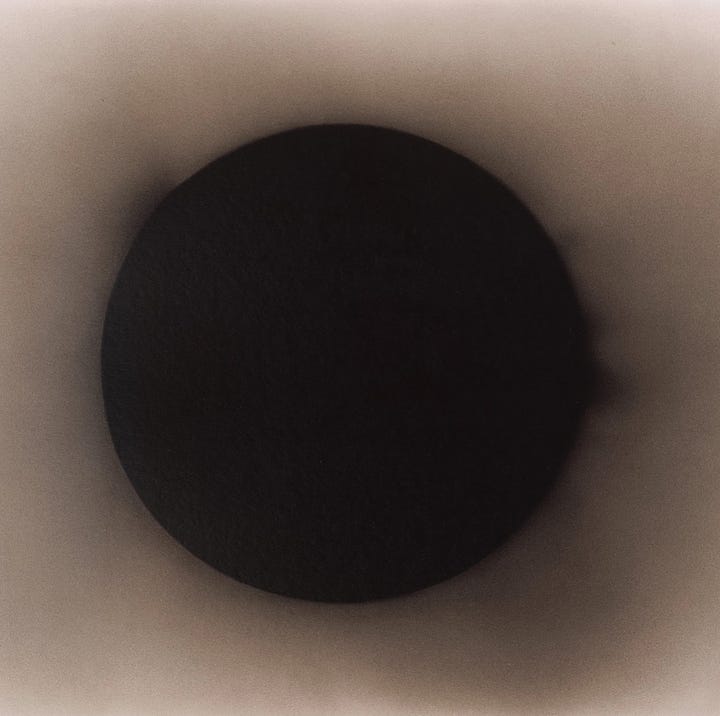

That’s the premise of a show I pitched in an open call in February. My project, Unnatural History, “challenges and troubles the concepts of veracity and verification in lens-based media”. The project features a series of unsettling images that convincingly depict unexplained atmospheric phenomena, viruses, biohazards, and other disasters. It’s meant to read as an exhibition of historical photographs—but is also meant to raise questions in the viewer’s mind. (“My gallery partner and I had the suspicion that something was off,” wrote Nguyen, of Miller’s inkjet prints.)

In essence, Unnatural History sounds exactly like Miller’s Gagosian show.

I made this image, Plume (Ohio), after the deliberate chemical release authorized in the wake of a train derailment in Ohio, in February.

Last year, well before the spy balloon saga that gripped North America in February, I made another image: Blimps, Faint Atmospheric Lighting, UFO Aliens.

“Must be something about [AI] inspiring worst-case scenarios and dystopias,” I wrote the AI researcher. Perhaps this era of disease, war, and political extremes is the best and worst time for these technologies to have found a mass audience. Perhaps we’re projecting our deepest fears onto these tools. Perhaps we want to see what these tools can do, no matter how strange or perverse. And we do; that’s why people make celebrity deepfakes. There were wild “pictures” of Trump’s arrest in New York days before he showed up for his (ultimately uneventful) arraignment.

Like my series, Still Life, exhibited last year at Modern Fuel Artist-run Centre, Miller’s work links “the transformative power of the new technology with the dawn of photography and the birth of mechanical reproduction”.

Gagosian’s text adds:

> With their sepia tones and uncanny, fugitive atmosphere, these oneiric works also draw on historical attempts to produce believable images of invented phenomena, including spiritualist photographs and Elsie Wright and Frances Griffith’s shots of the fictional “Cottingley Fairies.”

Once again, I’m left with the uncanny sensation that these words could describe my work, too.

In fact, here’s the artist statement I attached to my latest exhibition proposal:

> In the nineteenth century, as photography became more prevalent, some photographers took advantage of this new medium (and its audience) by falsifying images through visual effects.
>
> Some made portraits of psychic mediums apparently oozing ectoplasm. Others created photographs of people posing with fairies, or with deceased loved ones appearing as ghosts. The author August Strindberg erroneously claimed that the sensitized photographic plates he left overnight on a windowsill captured a faithful representation of the night sky.
>
> In these cases, photographers played on the then-popular notion that this new technology could perceive and record phenomena that our own eyes could not. (They weren’t totally wrong: today, astronomers use cameras that capture light in wavelengths beyond the visible spectrum.)
>
> Despite these examples, photography has long been considered an objective, descriptive medium. That has changed with the rise of image editing software, augmented reality filters, and now artificial intelligence (AI).
>
> Unnatural History was created with the AI text-to-image generators DALL·E 2 and Stable Diffusion. These tools were made available to the public in 2022, and their prevalence raises valid concerns about deepfakes, misinformation, and intellectual property rights. That year, I exhibited a series of botanical images generated by AI. I uploaded these images to a plant identification app to see whether it would classify them as real plant species.
>
> Unnatural History is a continuation of that project, troubling the scientific and historical record through a series of “fauxtographs” and captions generated with AI.
>
> Unnatural History’s unsettling images (of atmospheric phenomena, viruses, biohazards, and other disasters) evoke our collective experience of the past few years: a viral pandemic, domestic and geopolitical instability, and the ongoing effects of climate change, all seen through the lens of an increasingly fractured information and media environment, overpopulated by algorithms, bots, and corporate interests.

Coming soon to a gallery near you?

I’m not so sure of that now. On some level, my project feels redundant. What’s the future of AI-generated photography when it seems its visual vocabulary is so limited? Forget being able to tell an AI-generated photograph from a real photograph—who can tell one artist’s AI-generated images from another artist’s?

Can you?

I can’t seem to embed Tweets in Substack posts (for Reasons, no doubt), so here’s the text of a Tweet I wrote last November.

“It’s fascinating to me that so much of the #AIart space is focused on the future. Cities of the future. Humans of the future. Culture of the future. When I work with #AI, I’m working with the past: back to the dawn of photography, for example; in dialogue with what came before.”

I thought, perhaps, that I was an outlier in this space.

But:

Miller, says Gagosian, revisits the history of photography through an “embattled contemporary lens… [occupying] the unstable territory that is already becoming our home.”

Same.