There’s something uncanny about AI-generated images. They appear unfixed and loose, on the verge of morphing into something else entirely, scattering back-lit pixels into a jump-scare that hasn’t yet jumped. Will it jump? Will it scare? If so, when? That’s where the tension lies. They arise on screen like bad CGI from a mid-2000s Doctor Who episode, glitching into a warped, sometimes faintly monstrous, facsimile of the real thing.

Similarly, AI-generated “photographs” hum with inconsistencies. Even the framing feels off: off-centre, out of focus, bordered on only three of their four sides.

In other words, AI image generation is the perfect tool for exploring and replicating the atmospheric photographs created by Cy Twombly, the American abstract expressionist artist.

Known for his monumental paintings, Twombly also worked on a smaller scale: on paper, in sculpture, and with photography. While his paintings have been compared to “armadas of barges at sea; chariots of color; exoduses,” Twombly’s Polaroids were once described as “a poetic dialogue between the anatomy of moments and their fading…”

Twombly knew this to be true: moments fade out, but a Polaroid fades in.

Through his Polaroids, Twombly captured intimate views of domestic subjects: a lemon, a bunch of peonies, a shaft of sunlight cutting through an open window.

“To my mind, one does not put oneself in place of the past; one only adds a new link,” said Twombly. So, in that spirit, let’s add a new link.

Recently, I began working with Stable Diffusion, a text-to-image generator similar to DALL·E 2 and Midjourney. From my simple prompt (“cy twombly polaroid”), Stable Diffusion generated quite convincing interpretations of Twombly’s lens-based work.

Here, Stable Diffusion mimics Twombly’s signature motifs: columns, canvases, and bunches of flowers. Some of these images even resemble his paintings. Blossoms gather in the upper corner of the frame, leaving a long, trailing line bleeding downwards. The colours are generally muted, closely resembling a Polaroid’s milky surface and the spectral sheen it takes on as the film emerges into the open air.

“All the photographer had to do was watch and wait for the image to resolve itself,” said Marta Braun, of Twombly’s preferred camera, the Polaroid SX-70. With Stable Diffusion, DALL·E 2, Midjourney, and the others, that’s all you have to do, too.

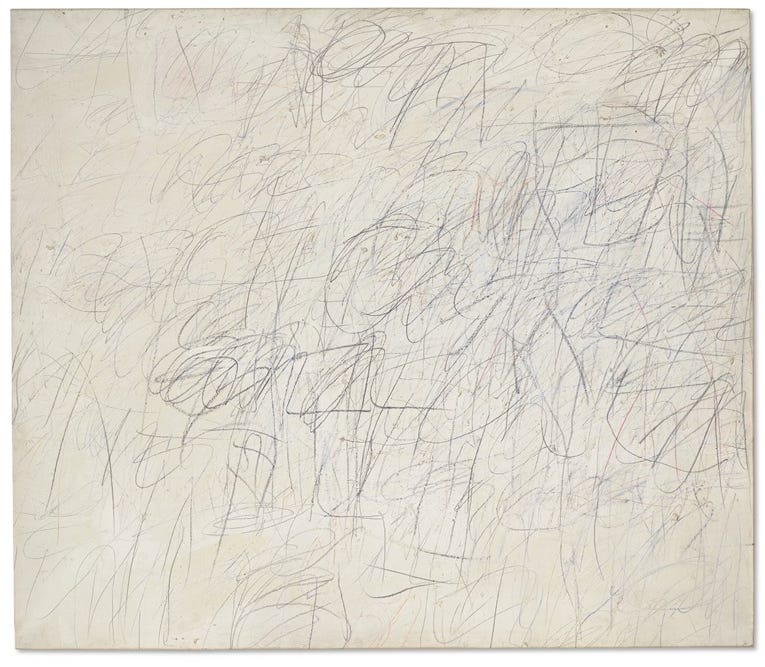

In the 1950s, Twombly began to experiment with automatic drawing, allowing his subconscious mind to direct the flow of his pencil. “Pencil is more my medium than wet paint,” he said, preferring neat lines to “mess”. Twombly was inspired by lettering, literature, mythology, and even his own handwriting, and he spent his career turning indecipherable scrawls into compelling abstract forms.

It’s fitting, then, that Stable Diffusion and its peers rely on written code: a language of steps, scales, and seeds that roil into these luminous (yet unnerving) emulations with the click of a button.

Of his process, Twombly said:

> “My line is childlike but not childish. It is very difficult to fake... to get that quality you need to project yourself into the child’s line. It has to be felt.”

It is very difficult to fake.

Or is it?

Here’s what I think: on a purely technical level, it’s not so difficult to fake. Deepfakes go viral almost every day. But you (still) can’t fake artistry.

Next time, I’ll talk about Still Life, the new body of work I’ve created with DALL·E 2 and DALL·E mini (now called Craiyon). Until then, why not share this post?
